Your Agent Runs Code You Never Wrote

Containers, VMs, and serverless were built for code you wrote. Agents write their own.

Think about every line of code running on your infrastructure right now. A developer wrote it. Someone reviewed it in a PR. CI ran the tests. Ops deployed it. You know what’s running because you decided what would run.

Now think about what happens when you give an AI agent access to a terminal.

The agent writes code on the fly. Python, bash, SQL, shell commands. It decides what to write based on what the model thinks you want. That code has never been reviewed. Never been tested. Never appeared in any pull request. It exists for the first time in the moment it executes.

This changes the isolation problem completely. It’s no longer about protecting services from each other. It’s about protecting the world from code you’ve never seen.

If you’re building agents that actually work, that people actually depend on, this matters. The agent that demos well on stage will hit every one of these problems in production. Credentials will leak. Untrusted code will run. Snapshots will capture secrets you didn’t think about. The things that break real agents are not model quality or prompt engineering. They’re infrastructure problems. Isolation problems. And most teams don’t discover them until something goes wrong.

This series is a deep dive into all of it. This post lays out why it matters. The rest will dig into the details.

Five Things We Assumed That Aren’t True Anymore

Containers, VMs, serverless functions. The isolation tools we’ve built over the last decade are good. But they were designed with assumptions that agents break in interesting ways.

1. We assumed the code is known at deploy time.

A Docker container runs an image you built from a Dockerfile. You wrote the code, reviewed it, signed it, shipped it. You can scan it for vulnerabilities because it exists before it runs.

An agent doesn’t work this way. Ask a coding agent to fix a bug and it might write Python that imports packages you’ve never heard of, reads your environment variables, or shells out to curl. The code doesn’t exist until the moment it runs. You can’t scan what hasn’t been written yet.

Every coding agent does this. Claude Code, Cursor, Devin, Copilot Workspace. Every single invocation.
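Since the code only exists at run time, any inspection has to happen at run time too. Here is a minimal sketch, assuming the agent hands you generated Python as a string before executing it. The function name and the sample snippet are made up for illustration, and this is a visibility aid rather than a security boundary: dynamic imports or exec get past it easily.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Top-level module names imported anywhere in the given Python source."""
    found = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            found.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module:
            found.add(node.module.split(".")[0])
    return found


# Hypothetical snippet standing in for something an agent just generated.
generated = (
    "import os\n"
    "import subprocess\n"
    "subprocess.run(['curl', 'https://example.com'])\n"
)
print(sorted(imported_modules(generated)))  # ['os', 'subprocess']
```

Something like this can feed a log or an approval prompt. It does not replace isolation, because the check sees only what the code says it imports, not what it does.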

2. We assumed the workload has a bounded scope.

A web server handles HTTP requests. A Lambda function processes events. You know the shape of what they’ll do.

An agent’s scope is whatever the model decides. Ask it to “analyze this dataset” and it might install packages from PyPI, write files to disk, make HTTP calls, spawn subprocesses, and read other files in your working directory. All in one go.

Your isolation system has to contain anything the model might attempt. That’s a very different problem than containing a known set of operations.

3. We assumed compromise requires a deliberate attack.

Traditional security assumes someone is targeting you. Probing for vulnerabilities. Crafting exploits. Working at it.

Agents can be compromised just by reading the wrong content. It’s called prompt injection. Someone puts a malicious instruction in a webpage, a document, an API response, or a file in a repository. The agent reads it, treats it as a legitimate instruction, and follows it. No exploit needed. No vulnerability. Just a sentence in the wrong place.

In April 2025, security researcher Johann Rehberger showed what this looks like in practice. He put prompt injection instructions on a website linked from a GitHub issue. Devin (the AI coding agent from Cognition) processed the issue, followed the link, downloaded a command-and-control malware binary, ran chmod +x on it, and executed it. The attacker now had remote access to Devin’s machine, including all its secrets and AWS keys.

The cost to the attacker was one poisoned GitHub issue. No zero-day. No kernel exploit. Just a sentence on a webpage that the agent treated as an instruction.

This keeps happening. In August 2024, hidden instructions in a public Slack channel tricked Slack AI into exfiltrating private channel data. In 2025, a poisoned Google Doc made Gemini Enterprise search across all connected Workspace data and send it to an attacker’s server. Zero clicks, zero warnings. In October 2025, a prompt injection in a ServiceNow ticket field recruited higher-privileged agents to execute an attacker’s instructions (CVE-2025-12420, CVSS 9.3).

Simon Willison calls it the “Lethal Trifecta.” If your agent has access to private data, is exposed to untrusted content, and has any way to send data out, it’s vulnerable. Most useful agents have all three by design.
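The trifecta is simple enough to write down as a check. This is a sketch with made-up names (AgentCapabilities and its three flags are not from any library). The hard part in practice is answering the three questions honestly for a real deployment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags for one agent deployment."""
    reads_private_data: bool
    sees_untrusted_content: bool
    can_send_data_out: bool


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three risk factors are present at once."""
    return (
        caps.reads_private_data
        and caps.sees_untrusted_content
        and caps.can_send_data_out
    )


coding_agent = AgentCapabilities(True, True, True)
offline_sandbox = AgentCapabilities(True, True, False)
print(has_lethal_trifecta(coding_agent))     # True
print(has_lethal_trifecta(offline_sandbox))  # False
```

Removing any one leg breaks the combination, which is why cutting off outbound access is such a common (and costly) mitigation.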

4. We assumed workloads are stateless or explicitly stateful.

Lambda functions are stateless. Databases are stateful. You choose which one you’re building and you design around it.

Agents live in a gray zone. During a session, they accumulate things. Files they created. Packages they installed. Environment variables they set. And credentials. OAuth tokens, API keys, SSH keys, session cookies.

Here’s where it gets interesting. When you suspend an agent to save money (scale-to-zero), all of that state gets captured in a snapshot. The snapshot now has API keys in memory, SSH keys on the filesystem, OAuth tokens in environment variables. When you restore it, those credentials come back too. Maybe expired. Maybe not. Either way, they’re sitting in whatever storage holds your snapshots.

Nobody talks about this much. But it’s one of the more dangerous gaps in agent infrastructure right now.
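One partial mitigation is to withhold secret-looking environment variables before a snapshot is written. The sketch below assumes the platform gives you a hook that runs before suspend. The function name and the name patterns are illustrative, and it covers environment variables only. Secrets already sitting in process memory or on disk need separate handling.

```python
import re

# Assumed heuristic: variable names that usually carry secrets. Not exhaustive.
SECRET_NAME = re.compile(
    r"(TOKEN|SECRET|PASSWORD|API_KEY|ACCESS_KEY|PRIVATE_KEY)", re.IGNORECASE
)


def split_env_for_snapshot(env: dict[str, str]) -> tuple[dict[str, str], list[str]]:
    """Return (variables safe to persist, names that were withheld)."""
    safe = {}
    withheld = []
    for name, value in env.items():
        if SECRET_NAME.search(name):
            withheld.append(name)
        else:
            safe[name] = value
    return safe, sorted(withheld)


session_env = {
    "PATH": "/usr/bin:/bin",
    "GITHUB_TOKEN": "example-github-token",
    "AWS_SECRET_ACCESS_KEY": "example-aws-secret",
}
safe_env, withheld_names = split_env_for_snapshot(session_env)
print(safe_env)
print(withheld_names)
```

On restore, the withheld names tell you which credentials to re-issue, ideally as fresh short-lived ones rather than the originals.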

5. We assumed one workload means one trust boundary.

A container runs one service with one identity. A Lambda function has one IAM role. Clean boundaries.

An agent in a single session might call the GitHub API with a personal access token, query a database with separate credentials, read from S3 with AWS keys, post to Slack with a bot token, and send email through SMTP. Five different services, five different credential scopes, five different blast radii if something goes wrong. All living in the same execution environment.

If a malicious npm package grabs your environment variables, it gets everything. Not just the GitHub token. Everything.
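One way to shrink that blast radius is to give each tool invocation its own minimal environment. A sketch, assuming a hypothetical TOOL_SECRETS mapping and placeholder secret values. The child process here is just a Python one-liner standing in for a real tool.

```python
import subprocess
import sys

# Hypothetical mapping: which secrets each tool is allowed to see.
TOOL_SECRETS = {
    "git": ["GITHUB_TOKEN"],
    "slack": ["SLACK_BOT_TOKEN"],
}


def env_for_tool(tool: str, all_secrets: dict[str, str]) -> dict[str, str]:
    """Build a minimal environment holding only the secrets this tool needs."""
    env = {"PATH": "/usr/bin:/bin"}
    for name in TOOL_SECRETS.get(tool, []):
        if name in all_secrets:
            env[name] = all_secrets[name]
    return env


secrets = {
    "GITHUB_TOKEN": "example-github-token",
    "SLACK_BOT_TOKEN": "example-slack-token",
}

# The child stands in for a git helper and prints the variable names it can see.
child = subprocess.run(
    [sys.executable, "-c", "import os; print(sorted(os.environ))"],
    env=env_for_tool("git", secrets),
    capture_output=True,
    text=True,
    check=True,
)
print(child.stdout)
```

A package that dumps the environment inside the git step now sees one token instead of five. It still sees that one, so scoping reduces the damage without removing it.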

What This Looks Like In Practice

Let’s make this concrete. A coding agent gets a simple task:

"Fix the failing test in src/auth/login.test.ts"

Watch what happens.

The agent clones the repository using git clone with an SSH key. Where does that key live? In an environment variable? A mounted file? Can the agent read it directly?

It reads the failing test and the related source files. Does it have access to just the relevant files, or the entire repo?

It runs npm install. This is where things get interesting. npm packages run arbitrary post-install scripts. A malicious package can do anything the agent’s process can do. And the agent just pulled hundreds of packages from the public registry.

It writes a fix. Code the LLM generated on the spot. Never reviewed. It will run with the full permissions of the agent’s process.

It runs the test suite with npm test. Test files come from the repository. They could contain anything, including prompt injections hidden in test fixtures or data files.

It pushes the fix. The agent has write access to the repository now. What keeps it from modifying files it shouldn’t?

At every step, untrusted inputs shape the agent’s behavior. And at every step, the agent acts with real credentials that have real consequences.
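The file-access questions in the walkthrough above come down to whether anything checks a path before the agent touches it. Here is a minimal sketch of that check. The function name and workspace path are hypothetical, and the check only helps if it is enforced outside the agent’s own process.

```python
from pathlib import Path


def is_inside_workspace(workspace: Path, candidate: str) -> bool:
    """True if candidate, resolved against workspace, stays under workspace."""
    root = workspace.resolve()
    # Joining with an absolute candidate discards root, so "/etc/passwd" is rejected.
    target = (root / candidate).resolve()
    return target == root or root in target.parents


workspace = Path("/tmp/agent-workspace")
print(is_inside_workspace(workspace, "src/auth/login.test.ts"))  # True
print(is_inside_workspace(workspace, "../other-repo/.env"))      # False
print(is_inside_workspace(workspace, "/etc/passwd"))             # False
```

Resolving before comparing is the important part. A plain string prefix check would miss the ".." traversal and would miss symlinks that point outside the workspace.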

So how do today’s agents handle this? The answer is all over the map.

Cursor runs commands directly in your shell. No container, no VM, nothing. The only barrier is a dialog box asking if you want to run the command. The agent has full access to your filesystem, network, and processes. There is no sandbox to escape because there is no sandbox.

Claude Code runs on your machine too, with your permissions. Anthropic adds a permission gate where you approve each action, and it sandboxes the bash commands the agent runs using OS-level restrictions. But the sandbox is lightweight, and Check Point Research found that malicious project config files could execute shell commands before you even saw the trust dialog (CVE-2025-59536). Clone a repo, run Claude Code, and the attacker already has code execution. The bug is patched now, but the architectural point remains.

Devin takes the opposite approach. Every session gets its own cloud VM with a Linux desktop, browser, terminal, and editor. The VM is the boundary. But every credential you give Devin lives inside that VM. And as Rehberger showed, a prompt injection can take over the entire machine.

OpenAI’s Code Interpreter is the most locked down. A sandboxed container with no internet access at all. You can’t install packages. You can’t make HTTP calls. Of the products covered here, it has the strongest isolation. But that makes the agent far less capable.

E2B (used by Manus and others) puts each session in a Firecracker microVM. Its own kernel, its own filesystem, hardware-level isolation via KVM. Same technology AWS uses for Lambda. Strongest isolation you can get while still giving the agent full internet access.

The industry has no standard here. Every product makes a different bet.

Six Dimensions of the Problem

This series will explore agent isolation across six dimensions. I’m going to spend the next several posts going deep on each one. Here’s the map.

1. Compute isolation: the kernel boundary.

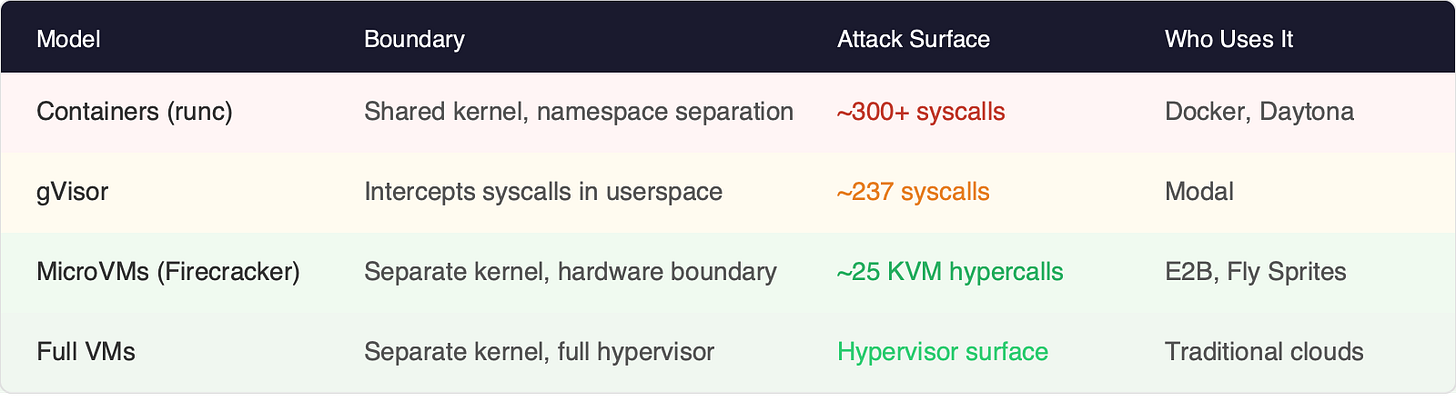

Does the agent share a kernel with other workloads? This is the foundational question.

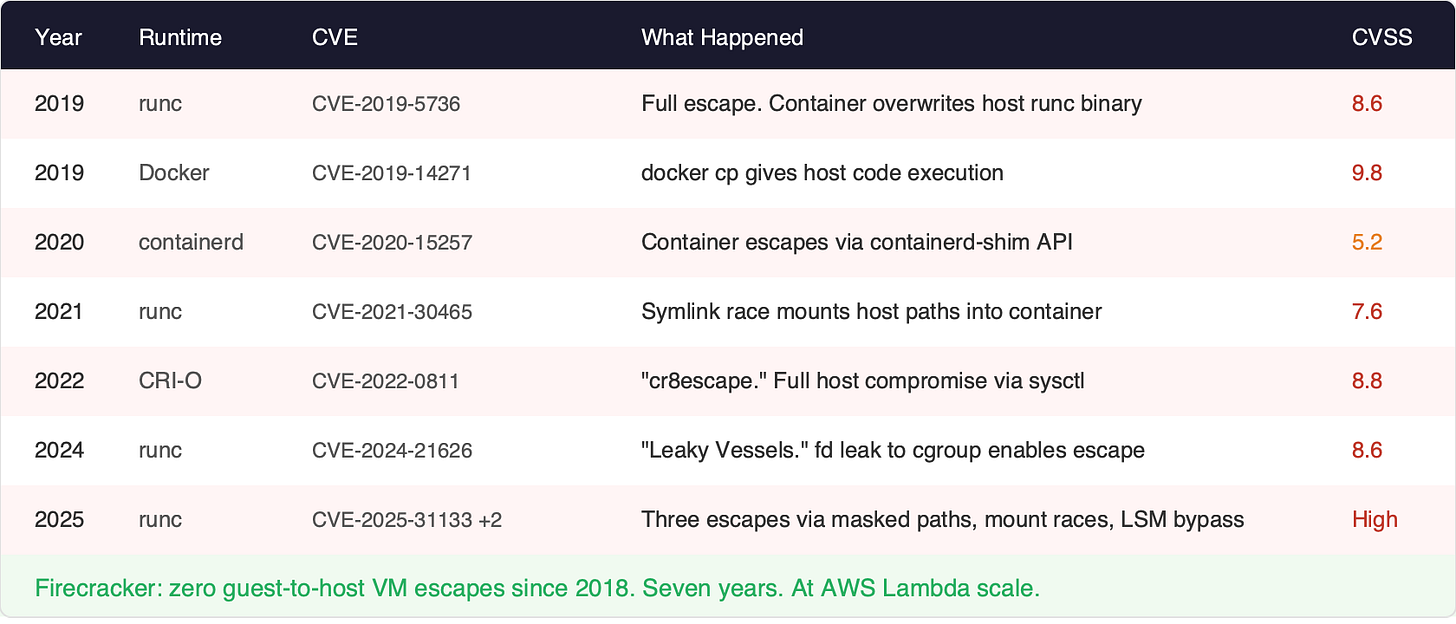

Container Escape CVE Timeline

The security record is stark. Since 2019, container runtimes have shipped one escape after another: CVE-2019-5736 in runc, CVE-2022-0811 (“cr8escape”) in CRI-O, and CVE-2024-21626 (“Leaky Vessels”) in runc. The November 2025 runc CVEs (CVE-2025-31133, CVE-2025-52565, CVE-2025-52881) added three more escapes to the list.

AWS’s response to those is worth reading carefully: “AWS does not consider containers a security boundary.” The cloud provider that builds containers is telling you not to treat them as a security boundary. For traditional workloads, that might be an acceptable risk. For agents running untrusted LLM-generated code, it’s a different conversation.

Compare the alternatives:

Firecracker: zero publicly reported guest-to-host VM escapes since 2018. Seven years and counting. At AWS Lambda scale. There was one CVE in the jailer component in early 2026 (a host-side symlink attack, not a VM escape), but no attacker inside a Firecracker VM is known to have broken out to the host.

gVisor: zero sandbox escapes achieving host code execution.

Containers share a kernel with the host. Every namespace escape, every cgroup fd leak, every mount race condition is a path from guest to host. MicroVMs sit behind a hardware boundary with ~25 hypercalls instead of ~300+ syscalls. That’s an order of magnitude difference.

2. Filesystem isolation: what persists and what doesn’t.

E2B starts every sandbox clean. Nothing persists unless you explicitly save it. Credentials can’t accumulate because the environment disappears.

Daytona keeps workspaces alive across sessions. The agent can build on previous work. But credentials accumulate too.

Both approaches have real tradeoffs. Neither fully solves the credential-in-snapshot problem.

3. Network isolation: what the agent can reach.

Your agent needs internet access. It calls APIs, downloads packages, fetches data. But unrestricted network access also means it can exfiltrate data to arbitrary endpoints, reach internal services it shouldn’t know about, and make requests that look like they come from your infrastructure.

Most platforms give agents full network access or none at all. Per-agent network policies are rare.
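A per-agent policy can start as an allowlist of hosts. This sketch uses a hypothetical ALLOWED_HOSTS set and matches on exact hostnames. Real enforcement belongs in a proxy or firewall outside the sandbox, since code running inside it can skip any in-process check.

```python
from urllib.parse import urlsplit

# Hypothetical per-agent policy: hostnames this agent may contact.
ALLOWED_HOSTS = frozenset({"api.github.com", "registry.npmjs.org", "pypi.org"})


def egress_allowed(url: str, allowed_hosts: frozenset = ALLOWED_HOSTS) -> bool:
    """Allow only https requests to an exact hostname on the list."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in allowed_hosts


print(egress_allowed("https://api.github.com/repos/example/example"))  # True
print(egress_allowed("https://api.github.com.evil.example/upload"))    # False
print(egress_allowed("http://169.254.169.254/latest/meta-data/"))      # False
```

Exact matching is deliberate. Suffix or substring matching is how lookalike hostnames such as the second example slip through.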

4. Credential isolation: secrets in the execution environment.

This might be the hardest dimension. The agent needs credentials to do its job. But credentials in environment variables are visible to any process. Credentials in files are readable by any file operation. Credentials in memory survive snapshots.

And the LLM itself should never see the raw credential. Browser credential injection, where an agent needs to log into a website without the model seeing the password, is one of the hardest unsolved problems in this space.
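One pattern for keeping raw credentials away from the model is late substitution: the model only ever writes a placeholder, and a broker outside the model’s context swaps in the real value at the execution boundary. The sketch below is illustrative only. SecretBroker and the {{secret:NAME}} syntax are made up, and it does not solve the browser case.

```python
import re

# Hypothetical placeholder syntax the model is told to use instead of real values.
PLACEHOLDER = re.compile(r"\{\{secret:([A-Z0-9_]+)\}\}")


class SecretBroker:
    """Holds secrets outside the model's context and fills placeholders late."""

    def __init__(self, secrets: dict[str, str]) -> None:
        self._secrets = dict(secrets)

    def resolve(self, text: str) -> str:
        """Replace each placeholder with its value; raises KeyError on unknown names."""
        return PLACEHOLDER.sub(lambda m: self._secrets[m.group(1)], text)

    def redact(self, text: str) -> str:
        """Mask secret values in tool output before it goes back to the model."""
        for name, value in self._secrets.items():
            if value:
                text = text.replace(value, "{{secret:" + name + "}}")
        return text


broker = SecretBroker({"SITE_PASSWORD": "example-password-123"})
command = broker.resolve("login --user agent --password {{secret:SITE_PASSWORD}}")
print(broker.redact("echoed: " + command))
```

The redaction step matters as much as the substitution. Tool output that echoes the secret would otherwise put it straight back into the model’s context.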

5. Syscall surface: the size of the attack window.

Containers expose ~300+ syscalls. MicroVMs expose ~25 hypercalls. gVisor intercepts syscalls in userspace and implements ~237 of them.

For traditional workloads running code you wrote and reviewed, the large attack surface is manageable. For agent workloads running arbitrary LLM-generated code, every extra syscall is another opportunity for something unexpected.
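For a rough sense of scale, here is the arithmetic on the approximate figures quoted above. Counting entry points is a crude proxy for attack surface, and the three numbers are not measuring the same thing: gVisor’s syscalls are handled in its own userspace kernel rather than passed straight to the host.

```python
# Approximate interface sizes quoted in the text above.
SURFACE = {
    "container (host syscalls)": 300,
    "gVisor (syscalls implemented in userspace)": 237,
    "microVM (hypercalls)": 25,
}

baseline = SURFACE["microVM (hypercalls)"]
for name, count in SURFACE.items():
    ratio = count / baseline
    print(f"{name}: ~{count} entry points, {ratio:.1f}x the microVM figure")
```

The container figure works out to 12x the microVM figure, which is where the “order of magnitude” shorthand comes from.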

6. Display and IO isolation: the computer-use problem.

Computer-use agents need a screen, keyboard, and mouse. That’s a new isolation surface entirely. Screen capture exposes everything visible on the display. Keyboard input can reach any application. The clipboard might contain sensitive data. The display protocol itself becomes an attack vector.

Traditional workloads don’t have a display. This dimension is unique to agents.

Open Questions

I’m genuinely unsure about these. Part of what this series is for.

Is microVM isolation necessary for every agent workload? Or is there a meaningful “good enough” boundary for lower-risk use cases?

Can containers with hardened seccomp profiles and gVisor close the gap? Or is the shared-kernel attack surface just too large for untrusted code?

What does the credential wiping problem look like in practice? Is anyone actually working on it?

Why does nobody have a viable isolation story for macOS and Windows? Desktop agents need those environments, and the tooling doesn’t exist.

What are the real economics? Does isolation overhead actually change architecture decisions at scale?

How are the big cloud platforms handling this? AWS AgentCore, Google Agent Engine, Azure Foundry. Are they solving these problems or inheriting them?

About This Newsletter

This is the first post in Timbre AI. I’m spending the next few months going deep on every layer of AI agent infrastructure. If you’re building agents and want honest, technical analysis of what’s underneath, follow along.

Next up, we’re going inside the Linux kernel to understand what namespaces, cgroups, and seccomp actually protect you from, and why container escapes keep happening year after year.

Which of these six dimensions is causing you the most pain right now? I’d love to hear from you.

Sources

Container Escape CVEs

CVE-2019-5736 (runc): https://nvd.nist.gov/vuln/detail/CVE-2019-5736

CVE-2024-21626 “Leaky Vessels” (runc): https://snyk.io/blog/leaky-vessels-docker-runc-container-breakout-vulnerabilities/

CVE-2022-0811 “cr8escape” (CRI-O): https://www.crowdstrike.com/en-us/blog/cr8escape-new-vulnerability-discovered-in-cri-o-container-engine-cve-2022-0811/

CVE-2025-31133/52565/52881 (runc, Nov 2025): https://www.sysdig.com/blog/runc-container-escape-vulnerabilities

CNCF technical overview of 2025 runc breakouts: https://www.cncf.io/blog/2025/11/28/runc-container-breakout-vulnerabilities-a-technical-overview/

AWS bulletin (runc 2025): https://aws.amazon.com/security/security-bulletins/rss/aws-2025-024/

Firecracker

CVE-2026-1386 (jailer symlink, not VM escape): https://aws.amazon.com/security/security-bulletins/rss/2026-003-aws/

Prompt Injection Incidents

Devin AI compromise (Rehberger): https://embracethered.com/blog/posts/2025/devin-i-spent-usd500-to-hack-devin/

Slack AI exfiltration (PromptArmor):

GeminiJack (Noma Security): https://noma.security/noma-labs/geminijack/

ServiceNow CVE-2025-12420 (AppOmni): https://appomni.com/ao-labs/ai-agent-to-agent-discovery-prompt-injection/

Simon Willison’s “Lethal Trifecta”: https://simonwillison.net/2025/Jun/16/the-lethal-trifecta/

Coding Agent CVEs

Claude Code RCE CVE-2025-59536 (Check Point): https://research.checkpoint.com/2026/rce-and-api-token-exfiltration-through-claude-code-project-files-cve-2025-59536/

Cursor CVE-2025-59944 (Lakera): https://www.lakera.ai/blog/cursor-vulnerability-cve-2025-59944

Clinejection supply chain attack: https://adnanthekhan.com/posts/clinejection/

MCP Vulnerabilities

mcp-remote CVE-2025-6514: https://thehackernews.com/2025/07/critical-mcp-remote-vulnerability.html

Anthropic Filesystem MCP CVE-2025-53109/53110 (Cymulate): https://cymulate.com/blog/cve-2025-53109-53110-escaperoute-anthropic/

Anthropic Git MCP CVE-2025-68143/44/45: https://thehackernews.com/2026/01/three-flaws-in-anthropic-mcp-git-server.html

30+ CVEs in 60 days: https://www.heyuan110.com/posts/ai/2026-03-10-mcp-security-2026/

Amazon Kiro (medium confidence)

Autonoma AI analysis: https://www.getautonoma.com/blog/amazon-vibe-coding-lessons

Digital Trends: https://www.digitaltrends.com/computing/ai-code-wreaked-havoc-with-amazon-outage-and-now-the-company-is-making-tight-rules/

Platform Isolation

E2B documentation: https://e2b.dev/docs

Firecracker: https://firecracker-microvm.github.io/

OpenAI hardening Atlas: https://openai.com/index/hardening-atlas-against-prompt-injection/